A Better Way to Build Code Sets

If you work with healthcare claims data, you’ve built code sets. Maybe it was a list of ICD-10-CM codes for diabetes, or heart failure, or chronic kidney disease. And if you have, you know that there has to be a better way to do this. All of the tools that exist are for billing purposes, not for research.

The traditional approach is some combination of clinical knowledge, keyword searches through code descriptions, and peer-reviewed literature. You open a reference table, search for “diabetes,” scroll through hundreds of results, and try to decide which codes belong and which don’t. It’s tedious, it’s error-prone, and two analysts working on the same condition can produce different code sets. That divergence matters — it can affect who ends up in the study cohort, and/or the inferences drawn from the results.

The Problem Is Bigger Than It Looks

ICD-10-CM has roughly 97,000 codes. They’re organized hierarchically, which helps, but the hierarchy is deep and full of clinical nuance. Take type 2 diabetes: the E11 family alone contains codes for ophthalmic, neurological, circulatory, renal, and dermatological complications, each with multiple subcategories. A keyword search for “kidney” won’t find codes described as “renal” or “nephropathy.” And even when you find the right family, you still have to decide which subcategories are relevant to your specific research question.

There are workarounds — reusing code sets from prior studies, borrowing from published literature, or building from institutional templates. They work, but they’re hard to audit, hard to reproduce, and tend to drift over time as analysts make small, undocumented adjustments.

Semantic Search Changes the Game

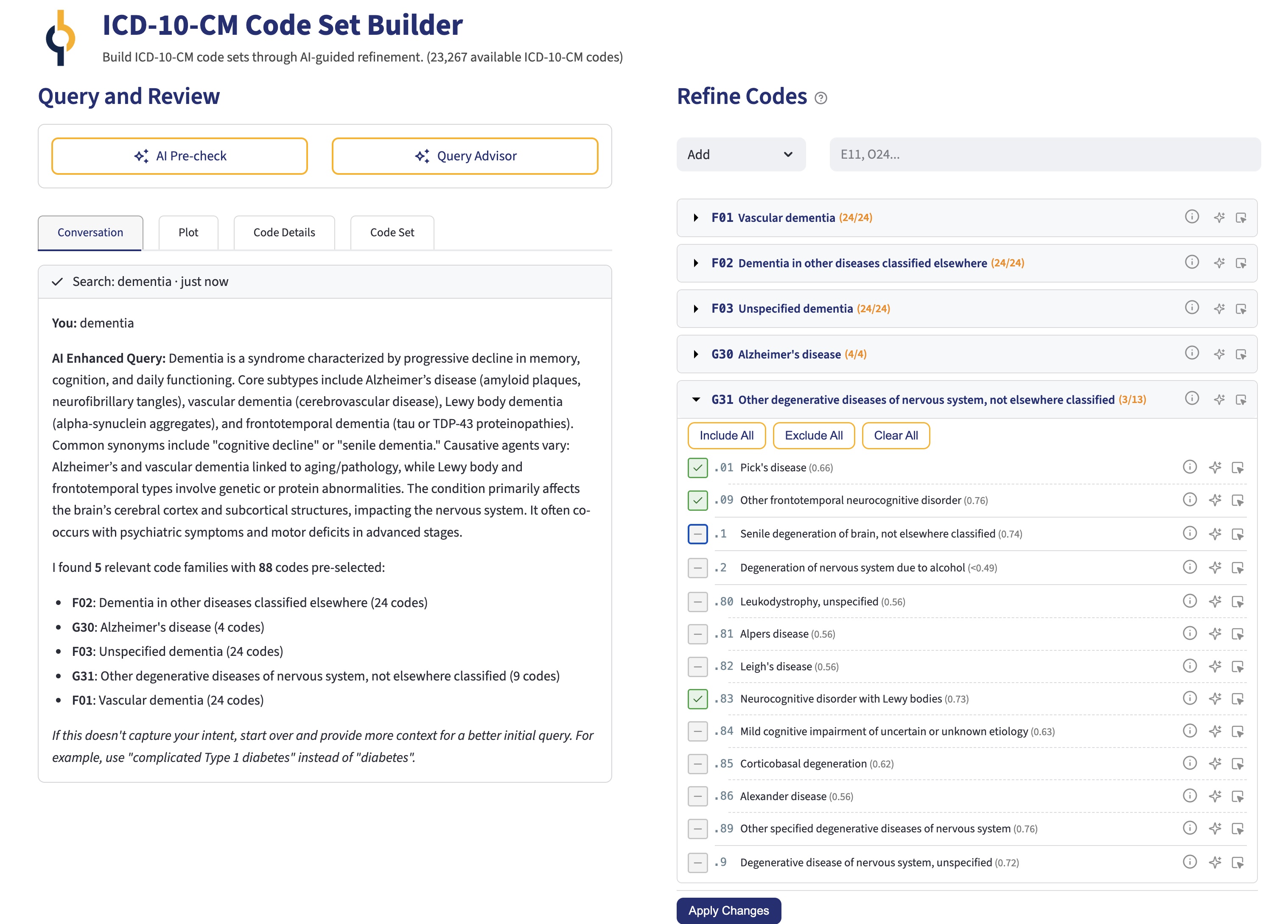

We’ve been building a tool that takes a fundamentally different approach. Instead of searching code descriptions with keywords, it searches code meaning with natural language.

The system encodes every ICD-10-CM code as a high-dimensional vector — a mathematical representation of its clinical meaning, informed by the code’s description, its position in the ICD-10 hierarchy, and LLM-generated clinical context. A query like “type 2 diabetes with kidney complications” returns codes that are semantically close to the intended meaning, not just codes that happen to contain matching words.

This is the same class of technology behind modern search engines and recommendation systems, applied to a very specific and important problem: identifying the right ICD-10-CM codes for a research project.

Not Just Search — a Feedback Loop

Semantic search is a good starting point, but a starting point isn’t a finished code set. The real value comes from what happens next.

The tool groups results into ICD10 code families defined by their 3 character group (e.g., E11). Then the tool scores them by relevance which is a measue of how close they are to your original query. Then, it identifies a natural cutoff between clearly relevant and probably irrelevant families. From there, the user reviews individual codes and marks them as include, exclude, or “unsure”. Each include or exclude decision feeds back into the algorithm through a process called Rocchio relevance feedback — the system adjusts its internal representation of the query based on those choices, pushing away from excluded codes and towards the included ones. It then re-searches with a refined understanding of what the user is looking for.

This creates an iterative refinement loop. With each round, the results get more precise. New families surface that the user might not have considered. Irrelevant ones drop away. The process is reproducible and auditable — a researcher can explain to a reviewer exactly how and why each code ended up in the set.

There is also a graphical feature that shows how close the codes are from each other in meaning, so you can get visual feedback on what is happening.

AI Assistance Where It Helps

We’ve layered optional LLM features on top of this core workflow. Before the user starts reviewing codes, an AI pre-check can classify subcategories as likely relevant, likely irrelevant, or uncertain — giving a head start on the manual review. A query advisor can flag unexpected code families and ask clarifying questions about intent. And an explain feature can break down what a specific code represents and why it might or might not belong.

These features are genuinely optional. The semantic search and Rocchio refinement work without any LLM. But when available, they reduce the cognitive load of sorting through hundreds of subcategories.

Harmonization: Comparing Code Sets

We also built a harmonization tool for a problem that sometimes arises in practice: there are two code sets for the same condition, built by different analysts or drawn from different sources, and there is a need to reconcile them. The tool shows the user what’s in both sets, what’s unique to each, and lets the user build a merged set with full visibility into the differences.

Case Study: Dementia in the Charlson Comorbidity Index

To make this concrete, consider a code set that thousands of researchers use routinely: the dementia algorithm from the Charlson Comorbidity Index.

The ICD-10 version of the Charlson index comes from Quan et al. (2005), a carefully conducted study that translated the original ICD-9-CM algorithms into ICD-10 using a multi-step consensus process across research groups in three countries. The dementia algorithm they published includes codes from four ICD-10 families: F00 (dementia in Alzheimer’s disease), F01–F03 (vascular, other, and unspecified dementia), G30 (Alzheimer’s disease), and G31.1 (senile degeneration of brain). It’s a reasonable list — and it’s been cited thousands of times.

But for people working with US claims data coded in ICD-10-CM, this list has problems.

First, F00 doesn’t exist in ICD-10-CM. The US clinical modification never adopted that code. In ICD-10-CM, Alzheimer’s disease with documented dementia is typically coded with a G30.- code for the underlying Alzheimer’s disease plus an F02.80/F02.81- code for the dementia manifestation. A researcher who takes the Quan codes at face value and searches for F00 in US claims data will find zero patients — not because there are no Alzheimer’s patients, but because the code doesn’t exist in the system they’re searching.

Second, and more consequentially, the algorithm misses entire categories of dementia that are now explicitly coded in ICD-10-CM:

- G31.0x — Frontotemporal dementia, including Pick’s disease and other frontotemporal variants. This is a clinically significant dementia subtype with its own family of codes.

- G31.83 — Neurocognitive disorder with Lewy bodies, which captures dementia with Lewy bodies as a distinct neurodegenerative disorder, and was introduced in ICD-10-CM after the Quan paper was published.

These aren’t obscure edge cases. Lewy body dementia accounts for an estimated 3–7% of all dementia cases. Frontotemporal dementia is a leading cause of dementia in people under age 60. Missing them means missing patients — exactly the kind of systematic gap that introduces bias into observational studies.

What the Tool Finds

When we run the query “dementia” through our Code Set Builder, semantic search surfaces exactly the families you’d expect: F01, F02, F03, and G30 — the core of the Quan algorithm. But it also identifies G31, scored highly because codes like G31.0x (frontotemporal dementia) and G31.83 (Neurocognitive disorder with Lewy bodies) are semantically close to the query. The tool doesn’t just match the word “dementia” in code descriptions — it understands that these conditions are dementias, even when the description reads “frontotemporal disease” or uses other clinical terminology.

From there, the AI pre-check step can help sort out which G31 subcategories belong (G31.0x, G31.83 — yes; G31.2, spinocerebellar degeneration — probably not), and iterative refinement allows fine-tuning based on the specific research question. The result is a code set that’s more comprehensive than Quan, specifically adapted to ICD-10-CM, and documented through every step.

This isn’t a criticism of Quan et al. — their work was rigorous and remains foundational. The point is that code sets built by manual translation twenty years ago inevitably have gaps, especially when the underlying coding system has continued to evolve. A tool that starts from semantic meaning rather than code-to-code translation can identify what manual processes miss.

Why This Matters

Code set construction is foundational work in observational research. It determines who’s in your study population and who’s not. Getting it wrong — in either direction — compromises everything downstream. Yet we’ve been treating it as an artisanal process, relying on individual expertise and ad hoc methods.

This tool doesn’t replace clinical judgment. It augments it with semantic understanding, algorithmic feedback, and AI assistance, producing code sets that are more comprehensive, more precise, and more defensible than what most of us can build by hand.

We’re using it internally at Outcomes Insights, and we’re excited about where it’s headed. In fact we were inspired to work on similar projects to identify medications (NDC and HCPCS codes) and procedures (CPT and HCPCS codes) based on disease areas.

Preview

by Outcomes Insights, Inc.

by Outcomes Insights, Inc.